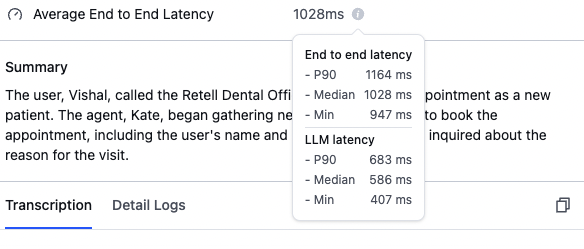

You can monitor the latency of individual calls in the “Call History” section.

Understanding latency metrics

End-to-end latency measures the total time from when the user stops speaking until the AI agent begins responding. This includes processing time, network delays, and model inference time.

Key metrics explained

- P90 (90th Percentile): 90% of calls have latency below this value.

- Median (50th Percentile): Half of the calls have latency less than this value.

- Min: The fastest response time achieved in any call.
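To make these definitions concrete, the sketch below computes the three metrics from a list of per-call latencies. The sample values are invented, and the nearest-rank percentile method is an assumption for illustration; the dashboard may use a different interpolation.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical end-to-end latencies in milliseconds, one per call.
latencies_ms = [620, 710, 680, 1450, 790, 655, 900, 730, 1100, 640]

summary = {
    "p90": percentile(latencies_ms, 90),
    "median": percentile(latencies_ms, 50),
    "min": min(latencies_ms),
}
print(summary)
```

Note how a single slow call (1450 ms) leaves the median untouched, which is why P90 is worth watching alongside it.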

Retrieve latency via the API

You can also retrieve detailed latency breakdowns programmatically using the Get Call API. After a call ends, the response includes a `latency` object with per-component metrics.
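A minimal sketch of such a request, using only the Python standard library. The base URL, the `/v2/get-call/{call_id}` path, and the Bearer authorization scheme are assumptions here and should be confirmed against the Get Call API reference; the helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: base URL and path of the Get Call endpoint; verify in the API reference.
API_BASE = "https://api.retellai.com"


def build_get_call_request(call_id, api_key):
    """Build (but do not send) an authenticated GET request for a single call."""
    return urllib.request.Request(
        f"{API_BASE}/v2/get-call/{call_id}",
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )


def extract_latency(call):
    """Return the call's latency object, or an empty dict if it is absent
    (for example, when the call has not ended yet)."""
    return call.get("latency") or {}


def fetch_latency(call_id, api_key):
    """Send the request and return the latency portion of the response."""
    request = build_get_call_request(call_id, api_key)
    with urllib.request.urlopen(request, timeout=10) as response:
        return extract_latency(json.load(response))
```

Calling `fetch_latency("your_call_id", "your_api_key")` after the call has ended would return the per-component object; keeping `extract_latency` separate makes the missing-field case easy to handle without a network round trip.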

Latency breakdown fields

The `latency` object contains the following components. Not all fields are present on every call; availability depends on the call type and features used.

| Field | Description |
|---|---|
| `e2e` | End-to-end latency from when the user stops talking to when the agent starts talking. Does not account for network trip time from the Retell server to the user’s frontend. |
| `asr` | Transcription latency: the difference between the duration of audio chunks streamed and the duration of the transcribed portion. |
| `llm` | LLM latency from the start of the LLM call to the first speakable chunk received. When using a custom LLM, this includes the websocket roundtrip time. |
| `llm_websocket_network_rtt` | Websocket roundtrip latency between your server and the Retell server. Only populated for calls using a custom LLM. |
| `tts` | Text-to-speech latency from triggering TTS to the first audio byte received. |
| `knowledge_base` | Knowledge base retrieval latency from triggering retrieval to receiving all relevant context. Only populated when the agent uses the knowledge base feature. |
| `s2s` | Speech-to-speech latency from requesting a response to the first byte received. Only populated for calls using a speech-to-speech model (e.g., Realtime API). |

Each component is an object with the following statistical fields:

| Field | Type | Description |
|---|---|---|
| `p50` | number | 50th percentile (median) latency in milliseconds |
| `p90` | number | 90th percentile latency in milliseconds |
| `p95` | number | 95th percentile latency in milliseconds |
| `p99` | number | 99th percentile latency in milliseconds |
| `min` | number | Minimum latency in milliseconds |
| `max` | number | Maximum latency in milliseconds |
| `num` | number | Number of data points tracked |
| `values` | number[] | All individual latency data points in milliseconds |
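Because components can be missing, code that reads the latency object should treat every field as optional. The sketch below ranks whichever components are present by median latency. The component and field names come from the tables above; the `summarize` helper and the sample numbers are hypothetical.

```python
from typing import Dict, List, Optional, Tuple, TypedDict


class LatencyStats(TypedDict, total=False):
    """Statistics reported for one component; every field is optional."""
    p50: float
    p90: float
    p95: float
    p99: float
    min: float
    max: float
    num: int
    values: List[float]


COMPONENTS = (
    "e2e", "asr", "llm", "llm_websocket_network_rtt",
    "tts", "knowledge_base", "s2s",
)


def summarize(latency: Dict[str, LatencyStats]) -> List[Tuple[str, float, Optional[float]]]:
    """Return (component, p50, p90) for each component present, slowest median first."""
    rows = []
    for name in COMPONENTS:
        stats = latency.get(name)
        if not stats or "p50" not in stats:
            continue  # component not populated for this call
        rows.append((name, stats["p50"], stats.get("p90")))
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Invented sample: a call with no knowledge base, custom LLM, or speech-to-speech model.
sample = {
    "e2e": {"p50": 850, "p90": 1200},
    "llm": {"p50": 420, "p90": 700},
    "tts": {"p50": 180, "p90": 260},
}

for name, p50, p90 in summarize(sample):
    print(f"{name}: p50={p50} ms, p90={p90} ms")
```

Sorting by median makes it easy to see which stage contributes most to end-to-end latency on a typical turn.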

Example response

Here is an example of the `latency` portion of a Get Call response: