Documentation Index

Fetch the complete documentation index at: https://docs.retellai.com/llms.txt

Use this file to discover all available pages before exploring further.

Overview

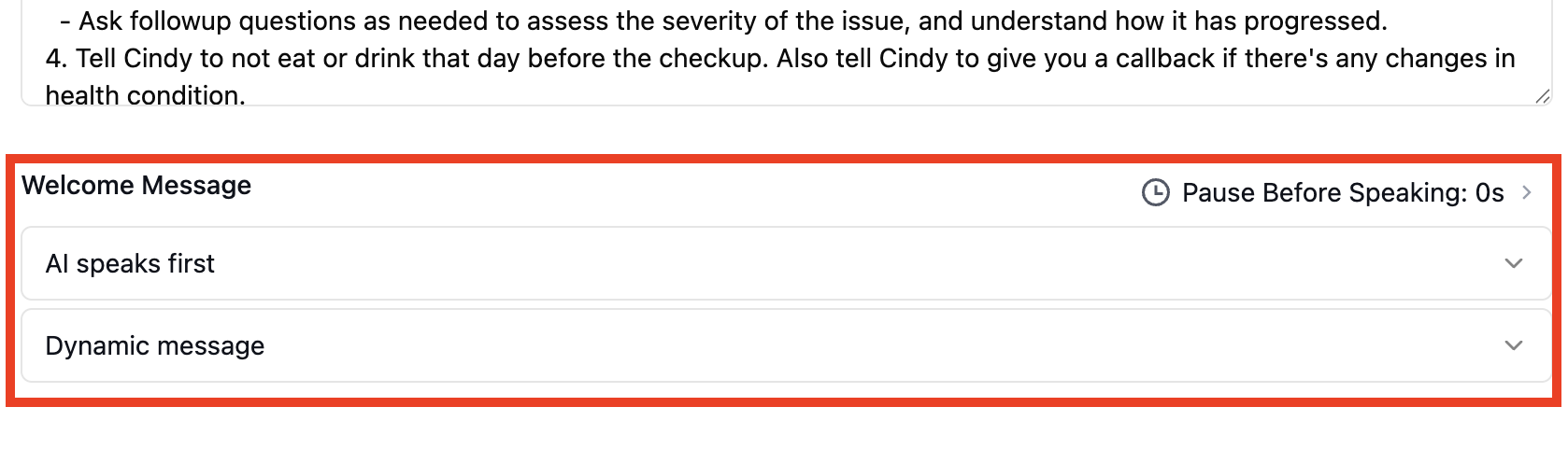

While we generally bill based on actual call duration, certain call characteristics may result in adjusted billing to ensure fair pricing for our services.Rule 1: Minimum Duration for Dynamic Opening Messages

When it applies: Calls shorter than 10 seconds that use dynamic opening messages when AI speaks first Billing adjustment: Minimum charge of 10 seconds

- Call duration: 6 seconds

- Dynamic opening messages: Enabled

- Billed duration: 10 seconds (4 seconds additional charge)

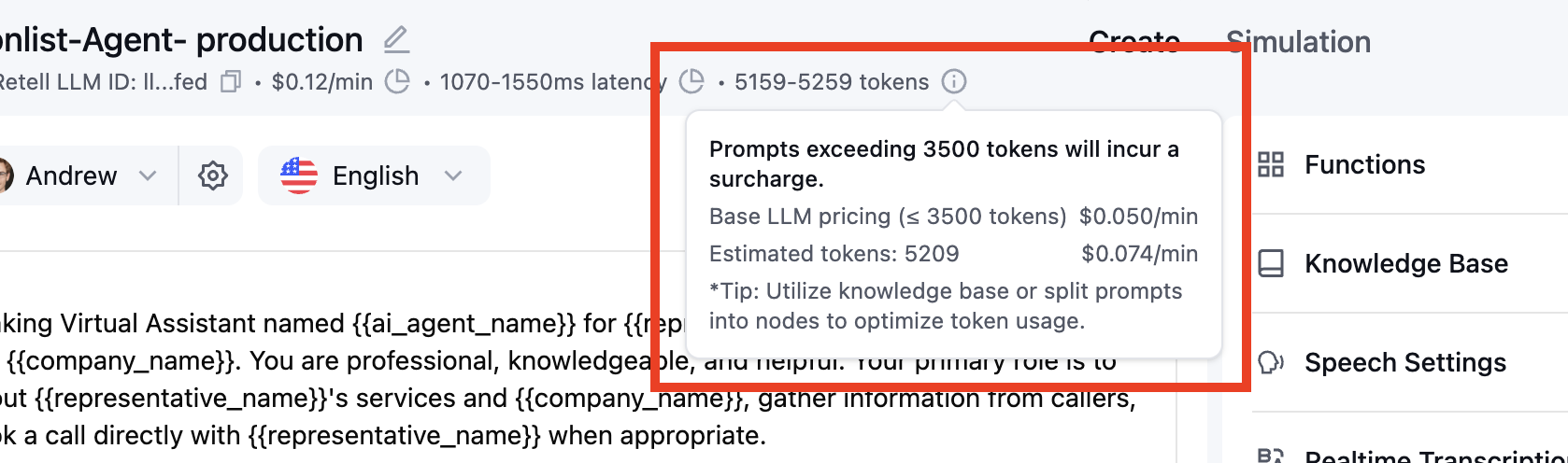

Rule 2: LLM Price Scaling for > 3,500 Token Prompt Length

When it applies: Agents that use more than 3,500 LLM tokens in their prompts Billing adjustment: Duration is scaled proportionally based on token usage What’s included in token calculation:- global prompt

- functions (tool descriptions)

- state / node prompt

- transcript between agent and user

- tool call history and results

Flex mode is a common trigger for this rule.

It compiles all node prompts, transitions, and tool descriptions into a single LLM

context, which can push the token count well above 3,500.

- Scaling Factor = Prompt LLM Tokens ÷ 3,500

- Billed Duration = Original Duration × Scaling Factor (rounded up)

- Call duration: 60 seconds

- LLM tokens used: 4,200

- Scaling factor: 4,200 ÷ 3,500 = 1.2

- Billed duration: 72 seconds (12 seconds additional charge)