The Conversation Node is the most commonly used node type in a conversation flow. It lets the agent converse with the user without calling tools. If you want the agent to talk to the user and call tools within the same node, use a Subagent Node. Note that the agent can hold a multi-turn conversation inside a single node, so you don’t need to create a new Conversation Node for every sentence the agent says. We recommend splitting a node when the logic branches or when the instruction grows too long.

Write Instruction

Inside the node, choose how to write the instruction the agent follows:

- Prompt: Write a prompt that the agent uses to dynamically generate what to say.

- Static Sentence: The agent says a fixed sentence first. If the conversation continues inside this node, the agent generates its later responses dynamically, based on the static sentence.
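The difference between the two instruction modes can be sketched in a few lines of Python. This is only an illustrative model, not Retell's API: the `Instruction` class, the mode labels `"prompt"` and `"static_sentence"`, and the `generate` callback (a stand-in for the LLM) are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Instruction:
    # Hypothetical labels for the two modes described above.
    mode: str  # "prompt" or "static_sentence"
    text: str


def next_utterance(
    instruction: Instruction,
    turn_index: int,
    generate: Callable[[str], str],
) -> str:
    """Return what the agent says on a given turn inside one node.

    `generate` stands in for the LLM: it receives the instruction text
    and returns a dynamically generated response.
    """
    # Static Sentence: the first turn is the fixed sentence, verbatim.
    if instruction.mode == "static_sentence" and turn_index == 0:
        return instruction.text
    # Prompt mode, or any later turn in Static Sentence mode:
    # the response is generated dynamically from the instruction text.
    return generate(instruction.text)
```

With a stub such as `generate = lambda text: "GEN:" + text`, a Static Sentence node returns its fixed text on turn 0 and a generated response on every later turn, while a Prompt node generates from the first turn onward.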

When Can Transition Happen

- When the user is done speaking.

- When Skip Response is enabled and the agent finishes speaking.

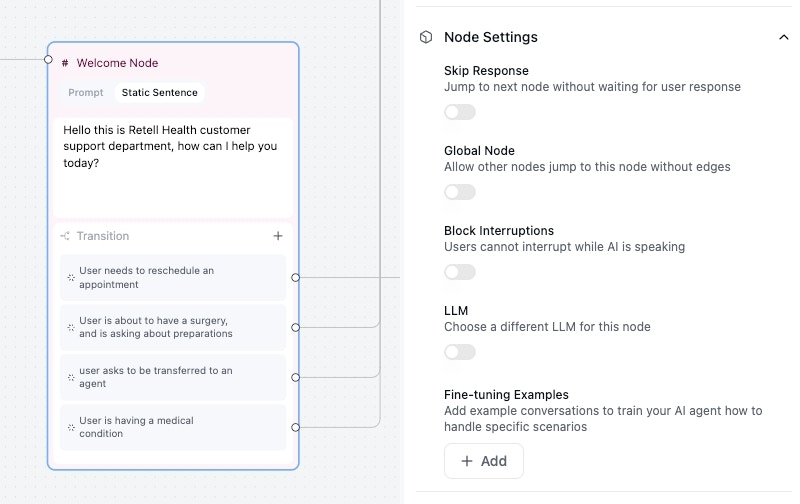
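The two transition triggers listed above can be written as a small predicate. This is a minimal sketch that assumes a simplified event model: the `Event` enum and the `can_transition` function are hypothetical names used for illustration and do not come from the product.

```python
from enum import Enum


class Event(Enum):
    # Hypothetical event names for the two moments described above.
    USER_DONE_SPEAKING = "user_done_speaking"
    AGENT_DONE_SPEAKING = "agent_done_speaking"


def can_transition(event: Event, skip_response: bool) -> bool:
    """Return True if a transition out of the node may happen on this event."""
    # A transition may always happen once the user is done speaking.
    if event is Event.USER_DONE_SPEAKING:
        return True
    # After the agent finishes speaking, a transition may happen
    # only when Skip Response is enabled.
    return event is Event.AGENT_DONE_SPEAKING and skip_response
```

In this model, a node with Skip Response disabled always waits for the user's turn to end before leaving the node.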

Node Settings

- Skip Response: when enabled, the node has a single outgoing edge, and the agent transitions to the next node through that edge as soon as it finishes speaking. This is useful for things like disclaimers, where the agent doesn’t need a response from the user before moving on.

- Knowledge Base: configure node-level knowledge bases to combine topic-specific knowledge with the agent-level knowledge base. Read more at Knowledge Base.

- Global Node: read more at Global Node

- Block Interruptions: when enabled, the user cannot interrupt the agent while it is speaking.

- LLM: choose a different model for this node. The selected model is used for response generation.

- Fine-tuning Examples: provide examples to fine-tune the node’s conversation responses and transitions. Read more at Finetune Examples.